The Future of Java: What Project Loom Can Do for Java Developers

By Mohammad Alorfali

A practical explanation of Project Loom and virtual threads—why they matter, how they improve scalability, and what they change for Java concurrency.

A simple analogy: the busy restaurant

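Imagine your Java application as a busy restaurant. Traditional platform threads are like chefs who each need their own full kitchen: effective, but expensive and limited. If every incoming request needs its own “kitchen,” you hit a ceiling quickly. In Java terms, each platform thread is backed by an operating-system thread with its own sizable stack. An I/O-bound server that dedicates one thread to every request therefore tends to run out of threads long before it runs out of CPU.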

Project Loom changes the model by making it cheap to run huge numbers of concurrent tasks, without forcing you into complex, callback-heavy code.
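Here is a minimal sketch of that idea. It assumes JDK 21 or later, where virtual threads are a final feature. The code submits thousands of blocking tasks, and each one sleeps briefly to stand in for an I/O wait. The class name `ManyTasks`, the task count, and the pause length are illustrative choices, not taken from the article.

```java
import java.time.Duration;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.atomic.AtomicInteger;

class ManyTasks {

    // Runs taskCount blocking tasks, each on its own virtual thread,
    // and returns how many of them completed.
    static int runBlockingTasks(int taskCount, Duration pause) {
        AtomicInteger completed = new AtomicInteger();
        // A new virtual thread per submitted task; close() (called by
        // try-with-resources) waits until every task has finished.
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < taskCount; i++) {
                executor.submit(() -> {
                    // Simulated I/O wait: the sleeping virtual thread is unmounted,
                    // so its carrier thread can run other virtual threads meanwhile.
                    Thread.sleep(pause);
                    completed.incrementAndGet();
                    return null;
                });
            }
        }
        return completed.get();
    }

    public static void main(String[] args) {
        long start = System.nanoTime();
        int done = runBlockingTasks(10_000, Duration.ofMillis(500));
        long elapsedMs = (System.nanoTime() - start) / 1_000_000;
        System.out.println(done + " tasks finished in " + elapsedMs + " ms");
    }
}
```

For comparison, a fixed pool of 100 platform threads would need roughly 10,000 / 100 × 0.5 s ≈ 50 s for the same work. With virtual threads, expect something closer to a single pause plus scheduling overhead. Exact timings depend on the machine.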

What’s special about Project Loom?

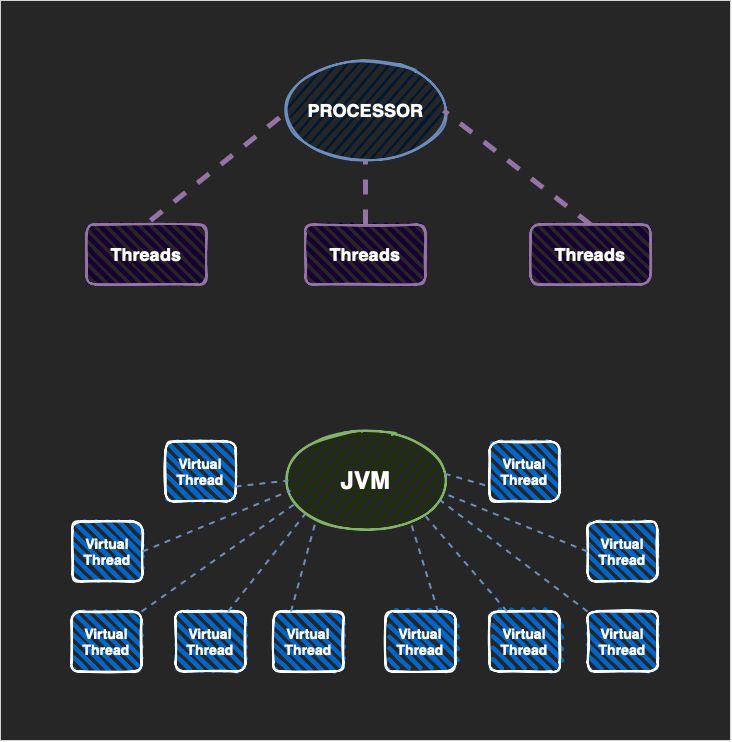

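Project Loom introduces virtual threads: lightweight threads managed by the JVM rather than by the operating system, delivered as a final feature in JDK 21 (JEP 444). The JVM schedules many virtual threads onto a small pool of platform “carrier” threads. When a virtual thread blocks on I/O or sleeps, it is unmounted and its carrier is free to run another one. This lets you keep the familiar, readable thread-per-request style while handling far more concurrent requests than is practical with one platform thread per request.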
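The standard `java.lang.Thread` API in JDK 21 creates both kinds of thread. The sketch below runs the same code on a virtual and a platform thread and asks each whether it is virtual. The class name `VirtualThreadBasics` and the thread-name prefixes are hypothetical, chosen for illustration.

```java
import java.util.concurrent.atomic.AtomicBoolean;

class VirtualThreadBasics {

    // Starts a thread from the given builder, waits for it, and reports
    // whether the code inside it was running on a virtual thread.
    static boolean isVirtualWhenRunOn(Thread.Builder builder) {
        AtomicBoolean observed = new AtomicBoolean();
        Thread thread = builder.start(() -> observed.set(Thread.currentThread().isVirtual()));
        try {
            thread.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // restore the interrupt flag
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return observed.get();
    }

    public static void main(String[] args) throws InterruptedException {
        // Same task, two kinds of thread; the name prefixes are illustrative only.
        System.out.println("virtual:  " + isVirtualWhenRunOn(Thread.ofVirtual().name("handler-", 0)));  // prints "virtual:  true"
        System.out.println("platform: " + isVirtualWhenRunOn(Thread.ofPlatform().name("worker-", 0))); // prints "platform: false"

        // Shorthand for starting an unnamed virtual thread.
        Thread quick = Thread.startVirtualThread(() -> System.out.println("hello from a virtual thread"));
        quick.join();
    }
}
```

The code inside the thread is ordinary blocking Java either way. Only the builder decides whether the JVM or the operating system schedules it.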

The payoff: less time sizing and tuning thread pools (virtual threads are cheap enough to create one per task instead of pooling them), fewer hard-to-debug asynchronous workarounds, and cleaner code for I/O-heavy workloads.
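Dropping the pool raises a question: how do you protect a downstream resource, such as a database, that can only take a limited number of concurrent calls? Oracle's virtual-thread guidance suggests bounding concurrency with a `Semaphore` rather than a pool. The sketch below follows that approach, assuming JDK 21+. The class name `BoundedDownstream` is hypothetical, and the 20 ms sleep stands in for the database call.

```java
import java.time.Duration;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Semaphore;
import java.util.concurrent.atomic.AtomicInteger;

class BoundedDownstream {

    // Runs `tasks` tasks, one virtual thread each, but allows at most `permits`
    // of them inside the simulated downstream call at any moment.
    // Returns the highest number of simultaneous calls that was observed.
    static int peakConcurrency(int tasks, int permits) {
        Semaphore limit = new Semaphore(permits);
        AtomicInteger inFlight = new AtomicInteger();
        AtomicInteger peak = new AtomicInteger();
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < tasks; i++) {
                executor.submit(() -> {
                    limit.acquire(); // waiting here parks the virtual thread, not a carrier
                    try {
                        peak.accumulateAndGet(inFlight.incrementAndGet(), Math::max);
                        Thread.sleep(Duration.ofMillis(20)); // stand-in for a database call
                    } finally {
                        inFlight.decrementAndGet();
                        limit.release();
                    }
                    return null;
                });
            }
        }
        return peak.get();
    }

    public static void main(String[] args) {
        System.out.println("peak concurrent calls: " + peakConcurrency(1_000, 10));
    }
}
```

Every task still gets its own thread, so the code stays in the simple one-thread-per-task style. The semaphore alone decides how many tasks reach the shared resource at once.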

Why it matters for Java developers

Even when you’re building high-traffic services, you still want the simplest concurrency model that meets your performance goals. Loom helps you write code that’s easier to maintain while still handling growing traffic.

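If you’re exploring modern backend performance and scalability, Loom is worth tracking, especially for systems dominated by network calls, database I/O, or external service integrations. Virtual threads raise throughput when tasks spend most of their time waiting. They do not make CPU-bound code run faster, because the number of CPU cores stays the same.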
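As an illustration of an I/O-dominated request, the sketch below handles one request by calling two backends concurrently, assuming JDK 21+. Both lookups are written as plain blocking methods. `loadUser`, `loadOrders`, and the 100 ms latencies are hypothetical stand-ins for a real database query and a remote service call.

```java
import java.time.Duration;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

class FanOutHandler {

    // Simulated blocking I/O; a real service would call a database or HTTP client here.
    static void simulateIo(Duration latency) {
        try {
            Thread.sleep(latency);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted during simulated I/O", e);
        }
    }

    // Hypothetical backend lookups, written as plain blocking methods.
    static String loadUser(int id) {
        simulateIo(Duration.ofMillis(100));
        return "user-" + id;
    }

    static String loadOrders(int id) {
        simulateIo(Duration.ofMillis(100));
        return "orders-for-" + id;
    }

    // Handles one request: the two lookups run concurrently on virtual threads,
    // so the request waits roughly for the slower call rather than for both in turn.
    static String handleRequest(int id) {
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            CompletableFuture<String> user = CompletableFuture.supplyAsync(() -> loadUser(id), executor);
            CompletableFuture<String> orders = CompletableFuture.supplyAsync(() -> loadOrders(id), executor);
            // join() blocks the calling thread until each result is available.
            return user.join() + " / " + orders.join();
        }
    }

    public static void main(String[] args) {
        long start = System.nanoTime();
        String result = handleRequest(42);
        long elapsedMs = (System.nanoTime() - start) / 1_000_000;
        System.out.println(result + " (" + elapsedMs + " ms)");
    }
}
```

Each lookup blocks its own virtual thread, which is cheap, so the handler needs no callbacks. Run the two calls sequentially and the request would take about twice as long.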

Conclusion

Project Loom aims to make Java concurrency simpler and more scalable with virtual threads. For teams building server-side Java, it’s a big step toward writing straightforward code without sacrificing throughput and responsiveness.

Written by Mohammad Alorfali, Senior Java Engineer in Dubai.